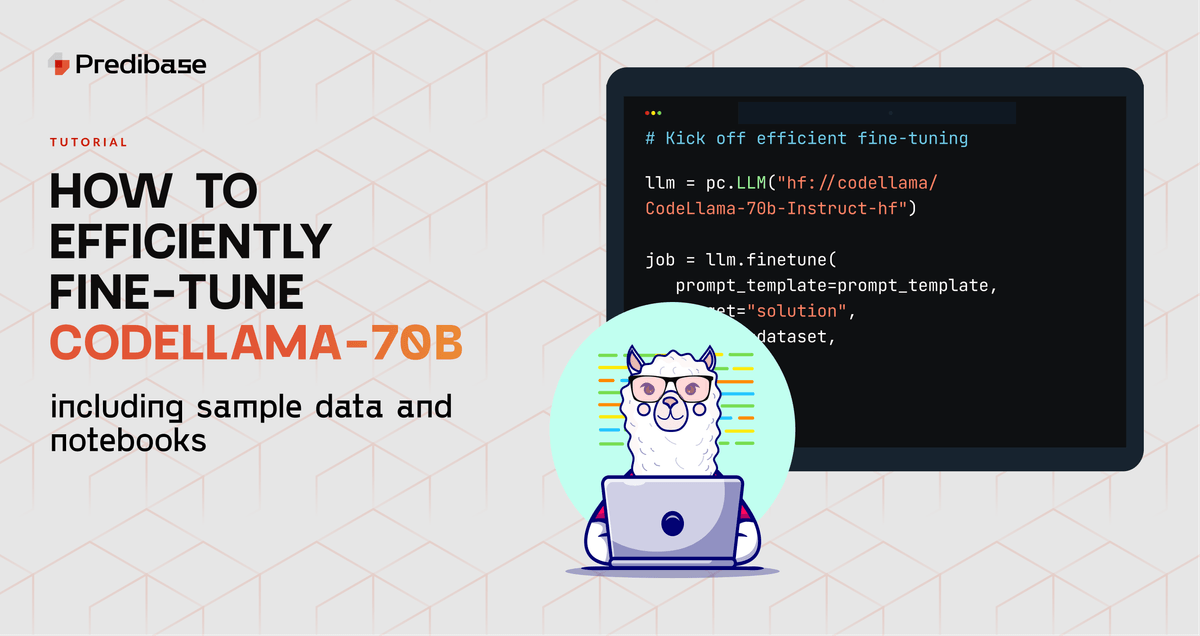

How to Efficiently Fine-Tune CodeLlama-70B-Instruct with Predibase

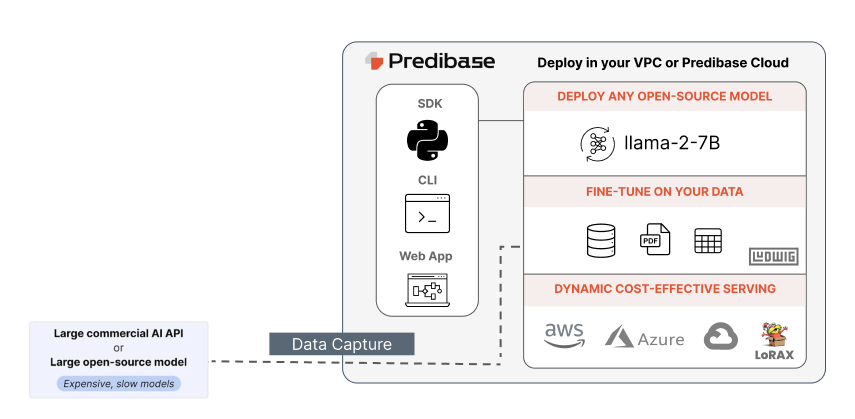

Learn how to reliably and efficiently fine-tune CodeLlama-70B in just a few lines of code with Predibase, the developer platform for fine-tuning and serving open-source LLMs. This short tutorial provides code snippets to help get you started.

Predibase on LinkedIn: Predibase: The Developers Platform for Productionizing Open-source AI

Piero Molino on LinkedIn: #ml #ai #datascience

Predibase on LinkedIn: eBook: The Definitive Guide to Fine-Tuning LLMs

Fine-tuning Llama 2 70B using PyTorch FSDP

Learn about LLM hosting with Predibase, Predibase posted on the topic

Ludwig.ai releases new release, Predibase posted on the topic

Graduate from OpenAI to Open-Source: 12 best practices for distilling smaller language models from GPT - Predibase - Predibase

Predibase on LinkedIn: How to Fine-tune LLaMa-2 on Your Data with Scalable LLM Infrastructure -…

Predibase on LinkedIn: ML Real Talk: 5 Reasons Why Adapters are the Future of LLMs

Geoffrey Angus on LinkedIn: How to Efficiently Fine-Tune CodeLlama-70B-Instruct with Predibase -…

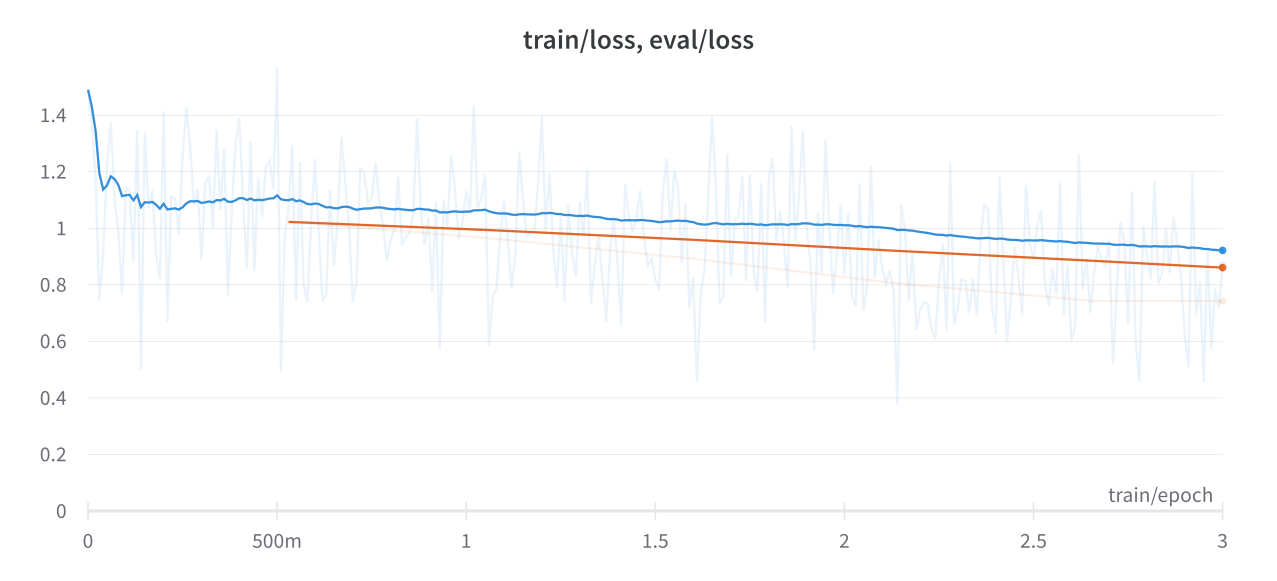

Finetuning LLaMA 70B with No-Code: Results, Methods, and Implications

Predibase on LinkedIn: How to Use LLMs on Tabular Data with TabLLM and Predibase - Predibase

Jeevanandham Venugopal on LinkedIn: How to Efficiently Fine-Tune CodeLlama-70B-Instruct with Predibase -…

Predibase on LinkedIn: #raysummit #finetune #llms